
A 10x10 matrix may not contain enough work to outweigh the overhead of running it in parallel. The amount of work each thread performs should be significant.

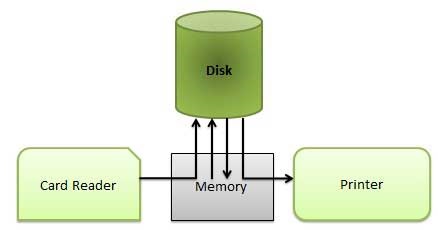
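To make this trade-off concrete, here is a minimal sketch for two matrices m1 and m2 with m3 = m1 * m2. The product is computed either serially or with one thread per row i of m3, and the same switch applies to '+'. The class name `Matrix`, the `parallel` flag and `time_mul` are hypothetical names chosen for illustration; they are not the author's implementation. On a standard CPython build, the global interpreter lock lets only one thread execute Python bytecode at a time. The sketch therefore shows the per-row thread structure and its start/join overhead, but it will not reproduce the speedup reported for large matrices.

```python
import threading
import time


class Matrix:
    """Minimal dense matrix with a serial/parallel switch for '*' and '+'.

    `parallel` is a hypothetical option name chosen for this sketch.
    """

    def __init__(self, rows, parallel=False):
        self.rows = [list(r) for r in rows]
        self.parallel = parallel

    def _map_rows(self, fn):
        """Return [fn(0), ..., fn(n-1)], serially or with one thread per row."""
        n = len(self.rows)
        if not self.parallel:
            return [fn(i) for i in range(n)]
        out = [None] * n

        def worker(i):
            out[i] = fn(i)  # each thread stores its result in its own slot

        threads = [threading.Thread(target=worker, args=(i,)) for i in range(n)]
        for t in threads:
            t.start()  # thread setup cost, paid once per row
        for t in threads:
            t.join()  # synchronization wait: block until every row is finished
        return out

    def __add__(self, other):
        # Element-wise sum; row i of the result is computed by one call.
        return Matrix(self._map_rows(
            lambda i: [a + b for a, b in zip(self.rows[i], other.rows[i])]),
            self.parallel)

    def __mul__(self, other):
        cols = list(zip(*other.rows))  # columns of the right operand, built once
        # Row i of m3 = row i of m1 dotted with every column of m2.
        return Matrix(self._map_rows(
            lambda i: [sum(a * b for a, b in zip(self.rows[i], col)) for col in cols]),
            self.parallel)


def time_mul(n, parallel):
    """Wall-clock seconds for one n x n product."""
    m1 = Matrix([[float(i + j) for j in range(n)] for i in range(n)], parallel)
    m2 = Matrix([[float(i - j) for j in range(n)] for i in range(n)], parallel)
    start = time.perf_counter()
    _ = m1 * m2
    return time.perf_counter() - start


if __name__ == "__main__":
    for n in (10, 100):
        print(f"{n}x{n}: serial {time_mul(n, False):.4f}s, "
              f"parallel {time_mul(n, True):.4f}s")
```

Running the file prints one timing line per matrix size. The actual numbers depend entirely on the machine and interpreter.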

When a small matrix, such as a 10*10 matrix, is processed in parallel, it is broken down into smaller pieces, which are then fed to the individual processors. Breaking up the matrix and handing the pieces over to each of the parallel processors takes time, so parallel processing performs worse on small matrices.

When a large matrix, for example a 100*100 matrix, is handed over to a serial processor, the processor can't work on this single program alone. It also has to handle all the interrupts and a plethora of other processes, so the wait time increases. When the same 100*100 matrix is handed over to parallel processing, it is broken down into reasonably small pieces and operated upon by possibly more than one processor. A CPU with two cores, for example, can dedicate one core to the matrix and the other to interrupt handling and other programs, which significantly reduces the wait time.

There are many factors in performance with multiple threads or tasks. Regardless of whether you have an OS or not, parallel programming has an overhead. At a minimum, the other processor (core) has to be set up to execute the thread code. Another item is the synchronization wait time: at some point, the primary processor needs to wait for the other processor(s) to finish, and this takes execution time. There is also an overhead related to signalling or communication. The secondary processor(s) must spend execution time notifying the primary processor that the computation is complete, and they must store the results somewhere. If this overhead takes more time than the work the thread actually does (such as a single multiplication), you may not notice any time savings from the parallel effort.
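The overhead items listed above (setting up a thread, waiting for it to finish, handing back its results) are paid per task. A common mitigation is a different technique from one thread per row. You create the worker threads once in a pool, give each task a contiguous block of rows, and skip parallelism entirely when the matrix is small. The sketch below shows that technique. `SERIAL_CUTOFF`, `rows_product`, `matmul` and the `tasks` parameter are hypothetical names. The cutoff of 64 rows is an arbitrary placeholder, not a measured crossover point. On standard CPython the GIL still limits real speedup for pure-Python arithmetic, so the sketch illustrates the task structure rather than performance.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical cutoff: below this many rows the product runs serially.
# Tune it by timing both paths on your own machine.
SERIAL_CUTOFF = 64


def rows_product(a, cols, start, stop):
    """Rows start..stop-1 of the product, given matrix a and the columns of b."""
    return [[sum(x * y for x, y in zip(a[i], col)) for col in cols]
            for i in range(start, stop)]


def matmul(a, b, pool=None, tasks=4):
    """Multiply list-of-lists matrices a (n x k) and b (k x m).

    With a pool and at least SERIAL_CUTOFF rows, the rows are split into
    about `tasks` contiguous blocks, one pool task per block.
    """
    cols = list(zip(*b))
    n = len(a)
    if pool is None or n < SERIAL_CUTOFF:
        return rows_product(a, cols, 0, n)  # too little work to pay the overhead
    block = max(1, -(-n // tasks))  # ceiling division: rows per task
    futures = [pool.submit(rows_product, a, cols, s, min(s + block, n))
               for s in range(0, n, block)]
    result = []
    for f in futures:
        result.extend(f.result())  # wait for each block, keeping row order
    return result


if __name__ == "__main__":
    n = 100
    a = [[float(i + j) for j in range(n)] for i in range(n)]
    with ThreadPoolExecutor(max_workers=4) as pool:  # threads created once, reused
        c = matmul(a, a, pool)
    print(len(c), len(c[0]))
```

Reusing one pool across many products amortizes the thread setup cost. Using a few large blocks means the synchronization and result hand-off happen a handful of times instead of once per row.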
I have an implementation of a generic Matrix, and I created an option to use the '*' and '+' operators with either parallel processing or serial processing. Consider that we have matrices m1 and m2, and m3 = m1 * m2. In the parallel version, we calculate each row i of m3 with a different thread; the serial version just calculates m3 directly. Then I measured the time of each operation on big and small matrices. On the small matrices, serial processing was faster than parallel processing, while parallel processing had better performance on the big matrices. For a small matrix, for example a 10*10 matrix, serial processing is favourable because the program doesn't need to be broken down into smaller pieces before it is carried onto the single processor.

We present a new computational model of visual search that follows on prior theoretical work by Buetti and Lleras emphasizing the contributions of parallel peripheral processing to visual search performance. The model uses concurrent parallel (distributed attention) and serial (focused attention) evaluative processes for inspecting items in a visual display. Search items are assigned random priorities for attentional selection. These priorities immediately begin to decay and are refreshed based on feature similarity to the search template. Items are stochastically selected for focused attention based on Luce's choice axiom defined over their priorities. Selected items are matched to a search template and either accepted as the target or rejected as a distractor. During this serial process, the priorities of the remaining search items are updated in parallel, in proportion to their proximity to fixation. The resulting model successfully simulates the typical logarithmic slopes found in human data when the target-distractor similarity is medium to low (e.g., Buetti et al., 2016; Wang et al., 2017). It also produces linear search slopes when target-distractor similarity is elevated. We present simulations of these and other classic visual search phenomena, like the difference between feature and conjunction search, as well as search asymmetries.
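The selection step based on Luce's choice axiom can be sketched as follows. Each item's probability of being selected is its priority divided by the sum of all priorities, P(i) = p_i / Σ_j p_j. This minimal sketch covers only that selection rule. The priority decay, the refresh from template similarity and the template matching described for the model are not implemented. `luce_probabilities` and `select_item` are hypothetical names, not the authors' code.

```python
import random


def luce_probabilities(priorities):
    """Luce's choice axiom: P(i) = priority_i / sum of all priorities."""
    total = sum(priorities)
    if total <= 0:
        raise ValueError("at least one priority must be positive")
    return [p / total for p in priorities]


def select_item(priorities, rng=random):
    """Stochastically pick the index of the item that receives focused attention.

    random.choices draws with probability proportional to the weights,
    which is exactly the Luce choice rule.
    """
    return rng.choices(range(len(priorities)), weights=priorities, k=1)[0]


if __name__ == "__main__":
    rng = random.Random(42)  # seeded so repeated runs give the same picks
    priorities = [0.5, 2.0, 1.0, 0.5]
    print(luce_probabilities(priorities))
    print([select_item(priorities, rng) for _ in range(10)])
```

Items with zero priority are never selected. Doubling every priority leaves the selection probabilities unchanged, since only the ratios matter.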
